See everything.

Train on anything.

RAPTOR is a real-time AI detection, classification, and targeting system that runs on a $250 edge computer. Operators correct the AI in the field and retrain custom models—no cloud, no PhD, no datacenter.

Real-time AI-Powered Recognition, Analysis & Persistent Tracking for Observation on Robots

The only field-retrainable AI vision system at the edge

Enterprise competitors cost 10–100× more, lock you into proprietary hardware, and can't learn new threats in the field. RAPTOR can.

Train in the field

Operator corrects a misdetection. RAPTOR learns. Retrain custom models on-device without sending data anywhere. The AI gets smarter every mission.

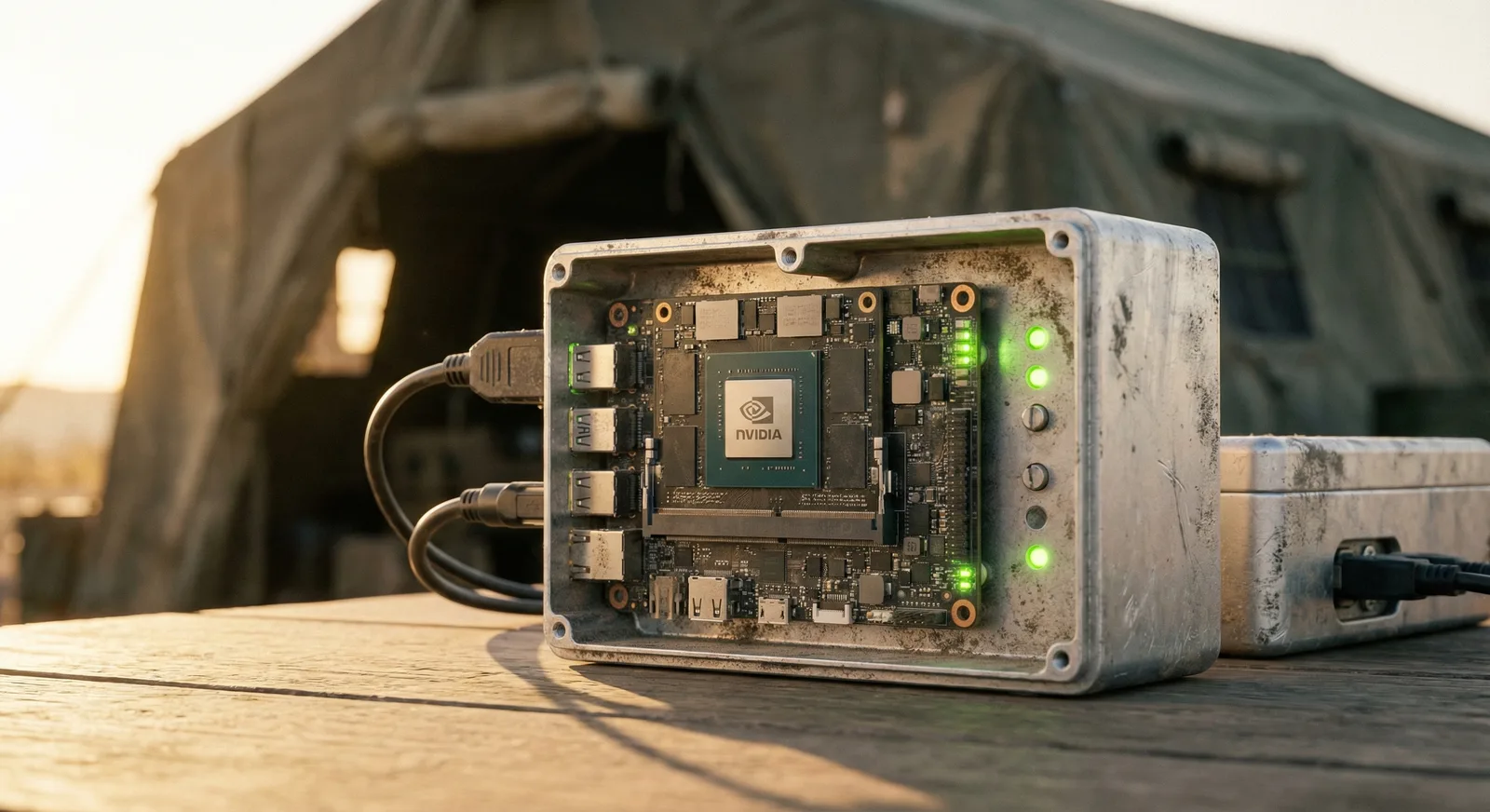

$250 compute module

Runs on NVIDIA Jetson Orin Nano. Full GPU-accelerated inference at a fraction of enterprise pricing. Shield AI charges $100K+. Anduril charges millions.

Any platform

UGVs, FPV drones, fixed-wing UAVs, multirotor platforms, or fixed installations. One AI system, every form factor. MAVLink, PX4, and DJI compatible.

Zero cloud dependency

Everything runs on-device: detection, tracking, retraining, and targeting. Works in DDIL environments, where connectivity is denied, degraded, intermittent, or limited, and where there is no connectivity at all.

Real-time inference

YOLOv8 detection with BoT-SORT multi-object tracking runs at 15+ FPS, rising to 30+ FPS with TensorRT acceleration. Low enough latency for FPV terminal guidance.

Human in the loop

Operators classify, correct, and designate targets through an intuitive web UI. RC override at any time. E-STOP always available. AI assists, humans decide.

One system. Every platform.

RAPTOR deploys across the full spectrum of unmanned and fixed systems.

🛡️ Unmanned Ground Vehicles

Autonomous threat detection and target following for ground robots. MAVLink integration steers toward designated targets. RC override at any time.

- ✓ Auto-steer toward designated targets

- ✓ ArduRover / ArduPilot compatible

- ✓ Manual/Guided mode switching via RC

🎮 FPV Drones

AI-powered terminal guidance for first-person-view platforms. Lock a target, and RAPTOR maintains tracking through jamming and signal loss—the capability Ukraine proved in combat.

- ✓ Visual target lock survives RF jamming

- ✓ 60g Jetson module fits 5" frames

- ✓ Lightweight headless mode for payload constraints

✈️ Fixed-Wing UAVs

Persistent ISR with automatic detection alerts. RAPTOR watches the feed so the operator doesn't have to stare at a screen for hours. Aerial-perspective models trained on VisDrone and DOTA datasets.

- ✓ Auto-alert on detection events

- ✓ Bandwidth-adaptive streaming

- ✓ Loiter pattern generation

🏗️ Fixed Installations

Perimeter security, checkpoint overwatch, and FOB protection. Multi-camera coverage on a single Jetson. 24/7 operation with thermal/IR for night.

- ✓ Multi-camera array management

- ✓ Day/night auto-switching models

- ✓ Alert escalation and alarm integration

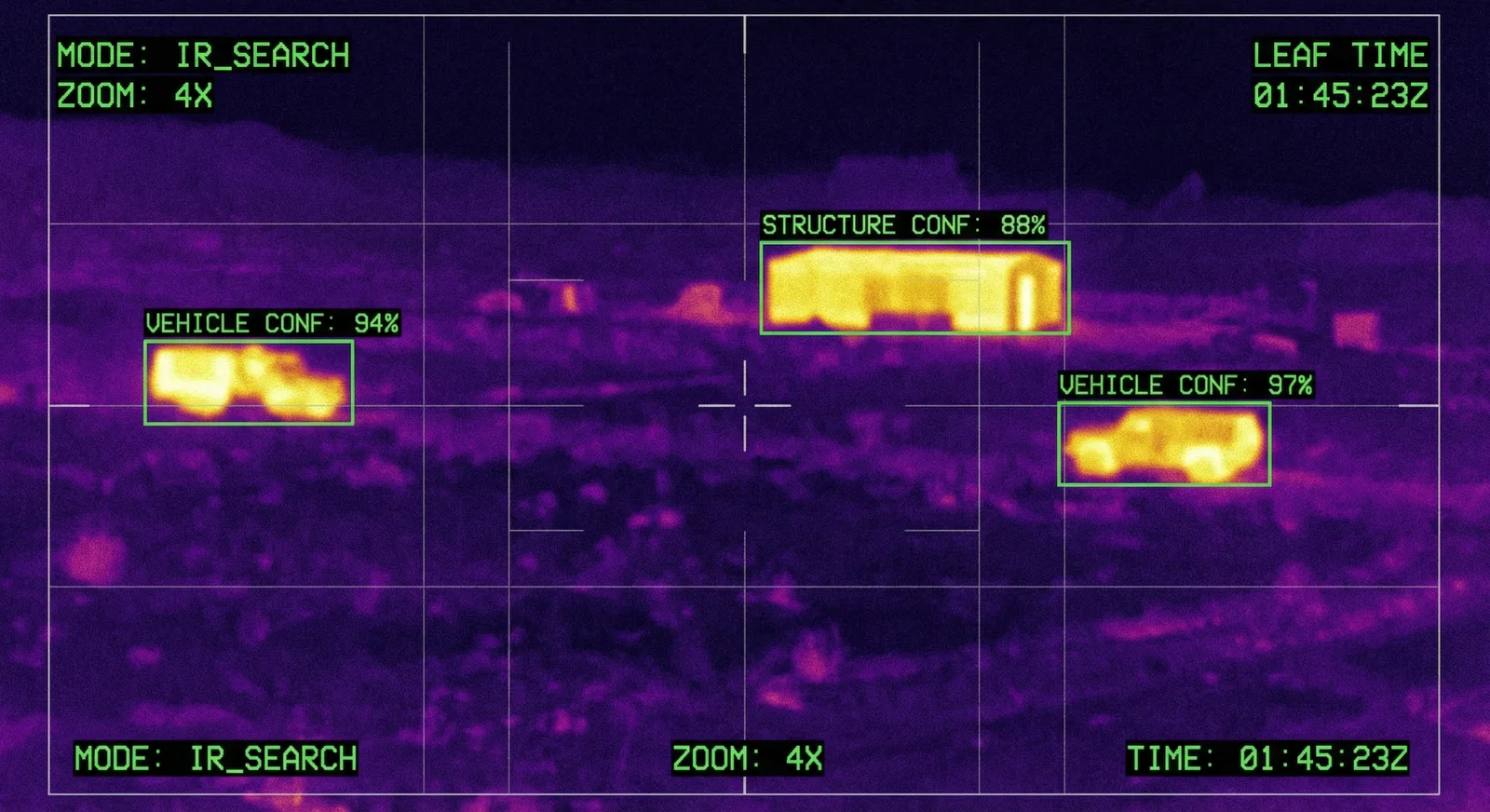

What RAPTOR does

Detection & Tracking

- 01 Real-time YOLOv8 inference with TensorRT acceleration

- 02 BoT-SORT multi-object tracking with persistent IDs (300-frame memory)

- 03 Dual-model detection: primary + COCO classes simultaneously

- 04 Auto-detect armed persons via weapon-person proximity

- 05 Adjustable confidence threshold (live slider)
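
To illustrate how a weapon-person proximity rule and a confidence threshold could work together, here is a minimal sketch. It is not RAPTOR's implementation: the `Box` type, the class names `rifle` and `pistol`, and the rule "a weapon box within a quarter of the person's height" are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned detection box in pixel coordinates (hypothetical type)."""
    label: str
    conf: float
    x1: float
    y1: float
    x2: float
    y2: float


def box_gap(a: Box, b: Box) -> float:
    """Shortest distance between two boxes in pixels; 0.0 if they overlap or touch."""
    dx = max(a.x1 - b.x2, b.x1 - a.x2, 0.0)
    dy = max(a.y1 - b.y2, b.y1 - a.y2, 0.0)
    return (dx * dx + dy * dy) ** 0.5


def armed_persons(boxes, weapon_labels=("rifle", "pistol"),
                  min_conf=0.5, max_gap_frac=0.25):
    """Return person boxes with a weapon box within a fraction of the person's height."""
    # The confidence threshold is applied first, as a live slider would do.
    kept = [b for b in boxes if b.conf >= min_conf]
    persons = [b for b in kept if b.label == "person"]
    weapons = [b for b in kept if b.label in weapon_labels]
    armed = []
    for p in persons:
        limit = max_gap_frac * (p.y2 - p.y1)  # assumed proximity rule
        if any(box_gap(p, w) <= limit for w in weapons):
            armed.append(p)
    return armed


frame = [
    Box("person", 0.91, 100, 50, 180, 300),
    Box("rifle", 0.72, 170, 150, 260, 180),   # overlaps the first person
    Box("person", 0.88, 600, 60, 670, 290),   # no confident weapon nearby
    Box("pistol", 0.30, 610, 150, 630, 170),  # dropped by the threshold
]
print(len(armed_persons(frame)))
```

Scaling the allowed gap by the person's height keeps the rule roughly consistent as targets move closer to or farther from the camera.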

Classification & Labeling

- 01 Tag detections: THREAT / FRIENDLY / NEUTRAL / UNKNOWN

- 02 Correct misclassifications in real-time from the UI

- 03 Labels persist across restarts

- 04 Identity reassignment when track IDs change

- 05 Audio alerts on new threat detection
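
One way labels could survive a restart and follow an object when its track ID changes is a small JSON-backed store. The sketch below is illustrative only; the `LabelStore` class, its method names, and the file layout are assumptions, not RAPTOR's actual storage format.

```python
import json
import os
import tempfile
from pathlib import Path

VALID_TAGS = {"THREAT", "FRIENDLY", "NEUTRAL", "UNKNOWN"}


class LabelStore:
    """Maps tracker IDs to operator tags and persists them as JSON (hypothetical)."""

    def __init__(self, path):
        self.path = Path(path)
        self.labels = {}
        if self.path.exists():
            self.labels = json.loads(self.path.read_text())

    def tag(self, track_id, tag):
        if tag not in VALID_TAGS:
            raise ValueError(f"unknown tag: {tag}")
        self.labels[str(track_id)] = tag
        self._save()

    def reassign(self, old_id, new_id):
        """Carry a tag over when the tracker issues a new ID for the same object."""
        tag = self.labels.pop(str(old_id), None)
        if tag is not None:
            self.labels[str(new_id)] = tag
            self._save()

    def get(self, track_id):
        return self.labels.get(str(track_id), "UNKNOWN")

    def _save(self):
        # Write to a temp file, then rename, so an interrupted write
        # is less likely to damage the existing label file.
        fd, tmp = tempfile.mkstemp(dir=self.path.parent, suffix=".tmp")
        with os.fdopen(fd, "w") as f:
            json.dump(self.labels, f)
        os.replace(tmp, self.path)
```

Saving on every change trades a little disk I/O for not losing operator corrections if the device loses power mid-mission.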

Targeting & Engagement

- 01 Crosshair overlay on designated targets

- 02 Multi-target priority queue with auto-designation

- 03 Autonomous target following (UGV steering)

- 04 E-STOP: spacebar or button, instant disengage

- 05 RC override always available—operator stays in control
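
A multi-target priority queue with auto-designation can be sketched with Python's `heapq`. The ranking used here (tag first, then confidence) and the `TargetQueue` API are assumptions for illustration; the page does not say how RAPTOR orders targets.

```python
import heapq
import itertools

# Hypothetical ranking: lower number means higher priority.
TAG_RANK = {"THREAT": 0, "UNKNOWN": 1, "NEUTRAL": 2, "FRIENDLY": 3}


class TargetQueue:
    """Priority queue of tracks; stale entries are skipped lazily."""

    def __init__(self):
        self._heap = []
        self._entries = {}               # track_id -> live heap entry
        self._order = itertools.count()  # unique tie-breaker, keeps insertion order

    def push(self, track_id, tag, conf):
        self.drop(track_id)  # replace any older entry for this track
        # Negate confidence so higher-confidence tracks sort first within a tag.
        entry = [TAG_RANK[tag], -conf, next(self._order), track_id, True]
        self._entries[track_id] = entry
        heapq.heappush(self._heap, entry)

    def drop(self, track_id):
        """Mark a track as no longer eligible (for example, lost or cleared)."""
        entry = self._entries.pop(track_id, None)
        if entry is not None:
            entry[4] = False

    def designate(self):
        """Pop and return the highest-priority live track ID, or None if empty."""
        while self._heap:
            entry = heapq.heappop(self._heap)
            if entry[4]:
                del self._entries[entry[3]]
                return entry[3]
        return None
```

In a human-in-the-loop system, the ID returned by `designate()` would be a suggestion that the operator can accept, change, or cancel.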

Field Retraining

- 01 Auto-save labeled data on every correction

- 02 One-click retrain from the web UI

- 03 Custom class list grows as operators add corrections

- 04 Import external datasets (VisDrone, DOTA, xView)

- 05 Batch annotation review and quality control
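
Saving a correction as training data usually means writing an annotation in the detector's label format. The sketch below assumes the common YOLO text format (one line per box: class index, then center x, center y, width, and height, all normalized to the image size). The helper names are hypothetical, and the page does not confirm which on-disk format RAPTOR uses.

```python
def yolo_label_line(class_id, box, img_w, img_h):
    """Format one pixel-space box (x1, y1, x2, y2) as a YOLO annotation line."""
    x1, y1, x2, y2 = box
    xc = (x1 + x2) / 2 / img_w
    yc = (y1 + y2) / 2 / img_h
    w = (x2 - x1) / img_w
    h = (y2 - y1) / img_h
    return f"{class_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}"


def class_id_for(name, class_list):
    """Look up a class index, appending the name if it has not been used before."""
    if name not in class_list:
        class_list.append(name)
    return class_list.index(name)


classes = ["person", "vehicle"]
cid = class_id_for("technical", classes)  # operator adds a new class
print(yolo_label_line(cid, (320, 180, 960, 540), 1280, 720))
```

Appending new names to the end of the class list keeps the indices in previously saved label files valid as the list grows.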

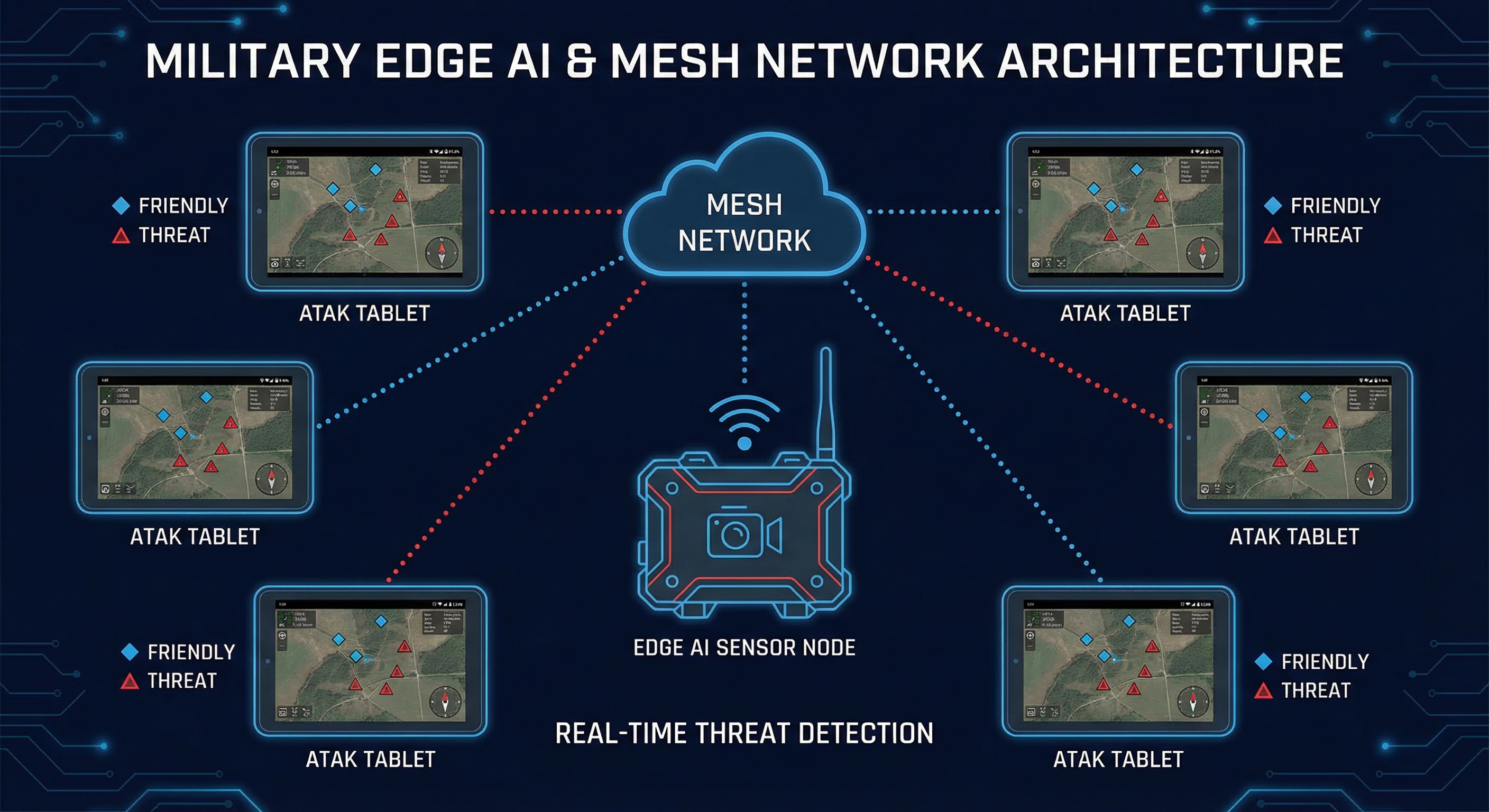

Detections on every screen, instantly

RAPTOR pushes Cursor on Target (CoT) events directly into the TAK ecosystem. Every threat appears on every operator's ATAK display in real time—no manual reporting, no radio calls, no delay.

Auto-Populate SA

Detected threats, vehicles, and persons of interest appear as CoT markers on ATAK maps. Classified as hostile, friendly, neutral, or unknown with confidence scores.

Works Disconnected

RAPTOR pushes CoT over local UDP—no internet required. Works with any TAK Server on the local mesh, including OpenTAKServer running on a companion device.
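
As a rough illustration of what pushing a CoT event over UDP involves, the sketch below builds a minimal event and sends it as a single datagram. The tag-to-type mapping, the UID scheme, and the `remarks` content are assumptions for the example. The default address `239.2.3.1:6969` is the multicast group commonly used by ATAK for situational awareness traffic; confirm the address and port for your own network.

```python
import socket
import xml.etree.ElementTree as ET
from datetime import datetime, timedelta, timezone

# Assumed mapping from operator tags to CoT ground-track types by affiliation.
COT_TYPE = {"THREAT": "a-h-G", "FRIENDLY": "a-f-G",
            "NEUTRAL": "a-n-G", "UNKNOWN": "a-u-G"}
TIME_FMT = "%Y-%m-%dT%H:%M:%SZ"


def build_cot(uid, tag, lat, lon, conf, stale_s=30, now=None):
    """Build a minimal CoT event as bytes; the marker expires after stale_s seconds."""
    now = now or datetime.now(timezone.utc)
    event = ET.Element("event", {
        "version": "2.0",
        "uid": uid,
        "type": COT_TYPE[tag],
        "how": "m-g",  # machine-generated position
        "time": now.strftime(TIME_FMT),
        "start": now.strftime(TIME_FMT),
        "stale": (now + timedelta(seconds=stale_s)).strftime(TIME_FMT),
    })
    # 9999999.0 is the conventional "unknown" value for altitude and error fields.
    ET.SubElement(event, "point", {
        "lat": f"{lat:.6f}", "lon": f"{lon:.6f}",
        "hae": "9999999.0", "ce": "9999999.0", "le": "9999999.0",
    })
    detail = ET.SubElement(event, "detail")
    ET.SubElement(detail, "remarks").text = f"{tag} conf={conf:.2f}"
    return ET.tostring(event, encoding="utf-8")


def send_cot(payload, host="239.2.3.1", port=6969):
    """Send one CoT event as a single UDP datagram."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(payload, (host, port))


payload = build_cot("raptor-node1-track7", "THREAT", 34.05, -118.25, 0.87)
# send_cot(payload)  # uncomment on a network with TAK clients listening
```

A stable `uid` per track lets receiving clients update an existing marker instead of adding a new one for every frame.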

Multi-Node Coverage

Deploy three RAPTOR nodes around a compound. Each pushes detections to TAK independently. Operators see a unified threat picture from all sensors on a single map.

How RAPTOR compares

| System | Cost | Edge Native | Field Retrainable | Platform Agnostic |
|---|---|---|---|---|
| RAPTOR | $250 compute | ✓ | ✓ | ✓ |
| Anduril Lattice | $millions | ✓ | ✗ | ✗ |
| Shield AI | $100K+/unit | ✓ | ✗ | ✗ |
| Skydio | $10K+/drone | ✓ | ✗ | ✗ |
| Palantir AIP | $5M+/yr | ✗ | ✗ | N/A |

Technical details

Compute

- NVIDIA Jetson Orin Nano / Orin NX

- 8GB–16GB unified memory

- JetPack 6 with CUDA + TensorRT

- 60g module weight

Performance

- 15+ FPS (detection + tracking)

- 30+ FPS with TensorRT

- <30ms inference latency

- 300-frame track persistence
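
These figures can be related with simple arithmetic. The sketch below converts frame rate into a per-frame time budget and the 300-frame persistence into seconds. It assumes the 300 frames are counted at the processing frame rate, which the page does not state.

```python
def frame_budget_ms(fps):
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def track_memory_s(frames, fps):
    """How long a track is remembered, in seconds, at a given frame rate."""
    return frames / fps


for fps in (15, 30):
    print(f"{fps} FPS: {frame_budget_ms(fps):.1f} ms per frame, "
          f"300-frame memory = {track_memory_s(300, fps):.0f} s")
# 15 FPS: 66.7 ms per frame, 300-frame memory = 20 s
# 30 FPS: 33.3 ms per frame, 300-frame memory = 10 s
```

At 30 FPS the budget is about 33 ms per frame, so inference under 30 ms fits within it, leaving the remainder for capture, tracking, and streaming if those steps run in sequence.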

Interface

- Web UI (any device on network)

- MJPEG annotated video stream

- MAVLink vehicle control

- systemd service (boot-ready)

What's coming

ATAK / CoT Integration

Push detections as Cursor on Target messages into the TAK ecosystem via UDP. Threat detections auto-appear on every ATAK device in the network—full battlefield integration with zero manual reporting.

Multi-Source Video Input

RTSP IP cameras, video files, and live webcam feeds. Drag-and-drop video upload from the web UI. Switch sources without restarting. Test and validate with recorded footage.

Automated Evidence Recording

Auto-record video clips when threats are detected. Timestamped incident recordings stored locally, downloadable from the web UI. Full chain-of-custody for after-action review.

TensorRT Optimization

Hardware-accelerated inference using NVIDIA TensorRT. 3–5× higher frame rates than PyTorch inference, enabling 30+ FPS real-time detection with full tracking on the $250 Jetson module.

Ceradon Sim Integration

Virtual testbench with ArduPilot SITL and Gazebo 3D. Test RAPTOR + UGV control in simulated environments before deploying to real hardware. Sim-to-real parameter export.

Gimbal Control

Slew-to-cue: automatically point camera at detected threats. SIYI, Gremsy, and MAVLink gimbal protocol support.

NanoTrack Terminal Guidance

Single-object tracker optimized for FPV terminal guidance. 60 FPS on Jetson. Operator locks target, AI maintains track through occlusion, scale change, and motion blur.

Thermal / IR Camera

FLIR Lepton, Seek Thermal, and FLIR Boson integration for 24/7 night operations.

Multi-Node Mesh

Coordinate multiple RAPTOR nodes across a perimeter. Shared detection data, deconfliction, and distributed tracking over BATMAN mesh networking.

Multi-Sensor Fusion

Combine RAPTOR (visual), Vantage (WiFi CSI through-wall), and RF detection (RTL-SDR) into a single $300 multi-phenomenology sensor node. Nobody else offers this at any price.

GPS-Tagged Detections

Lat/lon/alt for every detection via MAVLink telemetry. Geofenced alert zones and geospatial overlay.
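
A geofenced alert zone reduces to a point-in-polygon test on each detection's coordinates. The sketch below uses the standard ray-casting method and treats latitude and longitude as flat coordinates, which is a reasonable approximation for small zones that do not cross the antimeridian. The function name and zone format are assumptions for illustration.

```python
def in_zone(lat, lon, polygon):
    """Ray-casting point-in-polygon test; polygon is a list of (lat, lon) vertices."""
    inside = False
    n = len(polygon)
    for i in range(n):
        lat1, lon1 = polygon[i]
        lat2, lon2 = polygon[(i + 1) % n]
        # Only edges that straddle the point's latitude can be crossed by the ray.
        if (lat1 > lat) != (lat2 > lat):
            # Longitude at which this edge crosses the point's latitude.
            lon_cross = lon1 + (lat - lat1) * (lon2 - lon1) / (lat2 - lat1)
            if lon < lon_cross:
                inside = not inside  # each crossing toggles inside/outside
    return inside


# Hypothetical rectangular alert zone around a compound.
zone = [(34.050, -118.250), (34.050, -118.240), (34.060, -118.240), (34.060, -118.250)]
print(in_zone(34.055, -118.245, zone), in_zone(34.070, -118.245, zone))
```

A detection would raise a zone alert only when `in_zone` returns `True` for its reported position.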

Ready to deploy intelligent vision?

Whether you're integrating AI onto a UGV, adding autonomy to FPV platforms, or building a perimeter security system—RAPTOR gives you field-retrainable AI at a price point that makes sense.